If you’ve ever wondered whether the challenges you’re facing at your historic site or house museum are typical or unusual, there is some collective evidence to draw on.

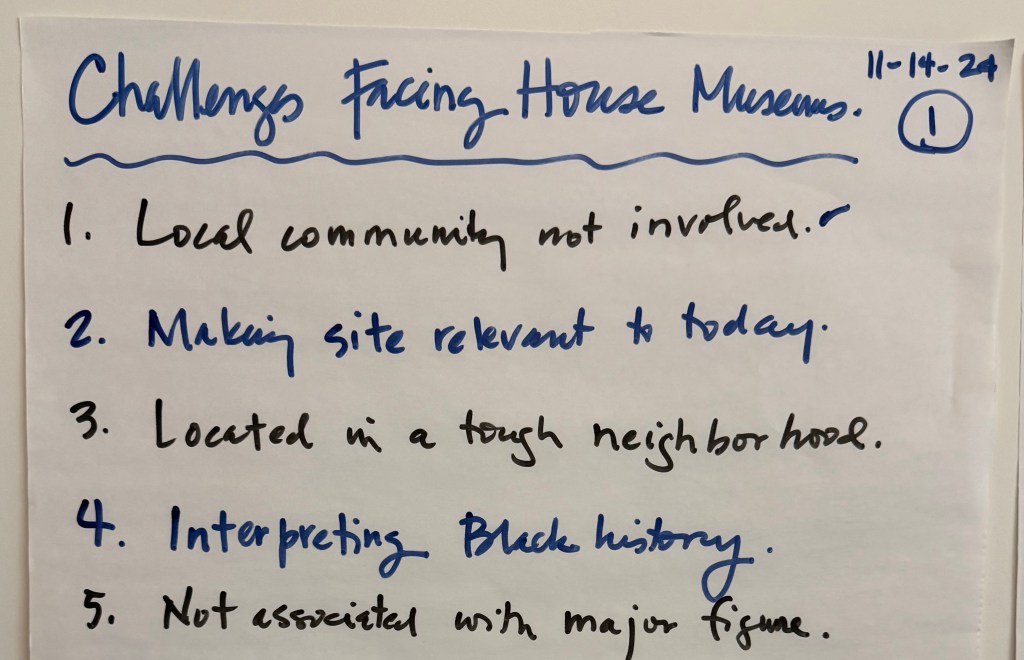

For the past decade, Ken Turino and I have led Reimagining Historic House Museums workshops across the country for the American Association for State and Local History, based on our book of the same name. We begin each workshop with a simple but revealing question: What is the greatest challenge facing your site?

We have collected these responses from a wide range of locations (such as Chicago, Denver, New York, Philadelphia, Wisconsin, Texas, and Maryland) and from a wide range of participants (staff, board members, and volunteers). From a sampling of these lists, I used ChatGPT to analyze and synthesize the responses into seven overarching issues, each representing a structural or strategic challenge. While the data was not collected in a scientific manner (participants are self-selecting, not random), the consistency of responses across regions and institution types is striking.

The most frequently cited—and arguably most consequential—is relevance and interpretive stagnation.

Participants consistently described static, unchanging tours; an inability to engage visitors; and historic sites that are dull and boring. They state that:

- the site feels stuffy

- it’s not interactive

- it doesn’t change

- there’s no reason to visit again

- tours are primarily anecdotal

- guides or leaders are unwilling to tell difficult or sensitive histories

- it’s a tourist trap offering no value

- it’s irrelevant, old, and decrepit.

This is not a failure of content. Most sites have compelling stories, significant collections, and deep knowledge of their history. So what’s the real challenge? It is a lack of interpretive strategy.

Many historic sites remain anchored in object-based interpretation or docent-led scripts that prioritize factual accuracy over audience meaning-making. While these approaches ensure correctness, they often fail to activate curiosity, emotional connection, or personal relevance. The consequences are predictable: low repeat visitation, weak engagement, and limited connection to contemporary issues.

At a deeper level, the problem is not that the stories are old—it is that the intended outcomes are unclear or unarticulated. Sites struggle to define what visitors should know, feel, or do as a result of their experience. Without this clarity, interpretation defaults to information delivery rather than purposeful engagement.

A useful corrective is a more intentional approach to interpretation—one that begins with outcomes, is organized through themes, and is delivered through purposeful design.

Start with outcomes. What should visitors know, feel, or do? This outcomes-based approach—aligned with practices promoted by Conny Graft in “Evaluation Is Not Just Nice, It Is Necessary” in the Reimagining book—forces clarity and provides a basis for evaluating success beyond attendance.

Then organize content through themes. Themes are not topics; they are ideas that give meaning to the material. Thematic interpretation, long advocated by the National Association for Interpretation, helps transform information into insight and provides coherence across the visitor experience. A terrific resource is Interpretation: Making a Difference on Purpose by Sam Ham.

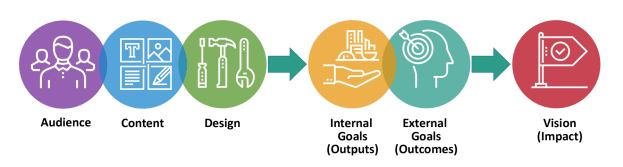

Finally, design experiences intentionally. Using an audience–content–design framework ensures that visitor needs are understood, content aligns with outcomes and themes, and formats—tours, exhibitions, or programs—are selected, organized, and delivered to create meaningful interactions rather than simply convey information. This is the purpose of interpretive planning.

Taken together, this approach shifts interpretation from coverage to purpose, from information delivery to experience design.

If your tours, exhibitions, or programs haven’t changed in years, it’s unlikely your outcomes have either. And if you can’t clearly state what visitors should know, feel, or do, then interpretation is being driven by habit rather than purpose. The question isn’t whether your stories are compelling—it’s whether you’re using them to create meaningful change for your audiences today.

This is only one of the seven recurring challenges we’ve identified. In upcoming posts, I’ll explore the others—and what they reveal about the current state and future direction of historic sites and house museums.

I was all on board for seeing the results of this report until I saw you’d used ChatGPT to compile results. This is lazy and inexcusable on such a small sample. You should be ashamed of yourself. You may think that using AI to analyze the results gives it a sheen of reliability but in fact it has the opposite effect; I now distrust everything you have to say.

Thank you for sharing your concerns. I understand why the use of AI in research and analysis raises important questions about reliability, judgment, and transparency.

I agree that a small sample is inherently limited. My intent was not to present these results as definitive or generalizable, but as exploratory and hypothesis-generating. Just to clarify, I examined comments from seven workshops, each of which included a couple dozen participant comments. That still does not make the sample representative of the field, but it does provide enough material to look for recurring patterns, questions, and possible areas for further inquiry.

I also want to be clear about how I used ChatGPT. I did not outsource judgment or interpretation to AI. I used it as a tool to help organize comments and identify possible themes, while I remained the “human in the loop”—reviewing the material, checking the patterns, applying my own professional judgment, and deciding what conclusions were appropriate. The interpretations and conclusions are mine, not ChatGPT’s. That is the same basic approach I use with other tools. Excel can sort data, calculate totals, and reveal patterns, but I still need to decide whether the results make sense.

I mentioned ChatGPT precisely because I did not want to hide my process. As we all continue to learn how generative AI should and should not be used in professional work, I believe transparency is essential: what tools were used, for what purpose, and with what safeguards. That openness may not resolve every concern, but it gives us a better basis for discussion, critique, and improvement. It is the same approach I try to bring to historical interpretation. We should be clear about our sources, methods, assumptions, and limits so that others can better understand, question, and evaluate the conclusions we draw.

I will continue to experiment with AI because its implications for our work are significant and still unfolding. Like many consequential technologies—from smartphones and computers to automobiles and gunpowder—it brings benefits, risks, tradeoffs, and unintended consequences. The challenge is not simply to accept or reject it, but to understand it carefully, use it responsibly, and remain alert to where it helps and where it harms. I’ll continue sharing what I learn on this blog in that spirit: openly, critically, and with the hope that we can learn from one another as the field develops better practices (see my previous post on “Using AI on a 500-Year-Old Page Changes Where Curatorial Expertise Begins”).

That said, your comment is a useful reminder that even limited use of AI can affect how readers perceive the credibility of a report. That skepticism is valuable. As historians, we should bring a critical eye to everything we read, hear, or watch, including work that uses new tools and technologies. I appreciate you taking the time to read the post carefully and to raise the issue directly.

Scrolled down to leave essentially the same comment as @ Holly M, and that reaction is not ameliorated in the least by a 5 paragraph response which I expect was also ChatGPTed.

It sounds like you’ve reached a firm conclusion about AI, but for others who are following along and want to learn more, I’ve found these resources to be useful:

Mollick, Ethan. Co-Intelligence: Living and Working with AI. Portfolio/Penguin, 2024. This best-selling book is a solid introduction to artificial intelligence and provides sufficient behind-the-scenes details to explain what it is and isn’t, and then describes its potential benefits and harms in creativity, work, and education. One of his major observations: “We can’t wait for decisions [about AI] to be made for us, and the world is advancing too fast to remain passive.” Mollick is a professor at the University of Pennsylvania and co-director of the Wharton School’s Generative AI Labs.

Khan, Salman. Brave New Words: How AI Will Revolutionize Education (and Why That’s a Good Thing). Viking, 2024. The title clearly reveals Khan’s slightly Pollyannaish opinion that AI offers a way of “teaching everything to everyone” and that “the best ideas will come not from the AI creating for us but when the AI is creating and riffing with us.” Because museums and historic sites play an important role in education, his perspective provides a deep dive into the possibilities and harms of AI, including bias and misinformation. Khan is the founder of Khan Academy, and he argues that AI offers a new opportunity for education that could provide the kind of personalized learning that students desperately need to succeed in school and in their careers. As a demonstration, he developed Khanmigo, an AI teaching assistant, to provide a personal learning resource for K-12 and college students.

Newport, Cal. His podcast “Deep Questions with Cal Newport” (also available as a vodcast on YouTube) provides weekly updates on the latest research on AI. It can be a bit jargon-laden at times, but he’s a reliable and thoughtful source. Newport is a professor of computer science at Georgetown University but is better known for his book, Deep Work: Rules for Focused Success in a Distracted World (2016), although I prefer his earlier book, So Good They Can’t Ignore You (2012).

Chirikov, Igor. How Instructors Regulate AI in College: Evidence from 31,000 Course Syllabi. CSHE Higher Education Working Paper Series, vol. 26-1. Berkeley: Center for Studies in Higher Education, University of California, Berkeley, 2026. Chirikov’s study of syllabi at a large public research university shows that professors have become more permissive about the use of AI, but they differentiate among its potential uses. AI is now most commonly allowed for editing and study, but not for drafting and reasoning. Today’s college students are learning in a world shaped by AI and they will soon be leading the museum field. Are you ready?

Lubar, Steven. “AI for Local History Organizations.” Technical Leaflet no. 308. History News 79, no. 4 (2024): 1-8. Lubar suggests several promising uses for AI in small museums and historical societies, including research, collections access, interpretation, public outreach, and tool-building. The use of AI in museums must be guided by ethics and human expertise, so he provides some best practices and principles for adoption. Lubar is a professor emeritus at Brown University and a former curator at the Smithsonian Institution.

And no, this wasn’t prepared with AI, although I did use spellcheck and confirmed details using Wikipedia. Remember when the value and accuracy of Wikipedia was widely debated? That history is a useful reminder that new information tools often move from suspicion to everyday use, while still requiring judgment.
